anotherRealiti

https://www.cslasha.com/metaplex/realiti_store.html

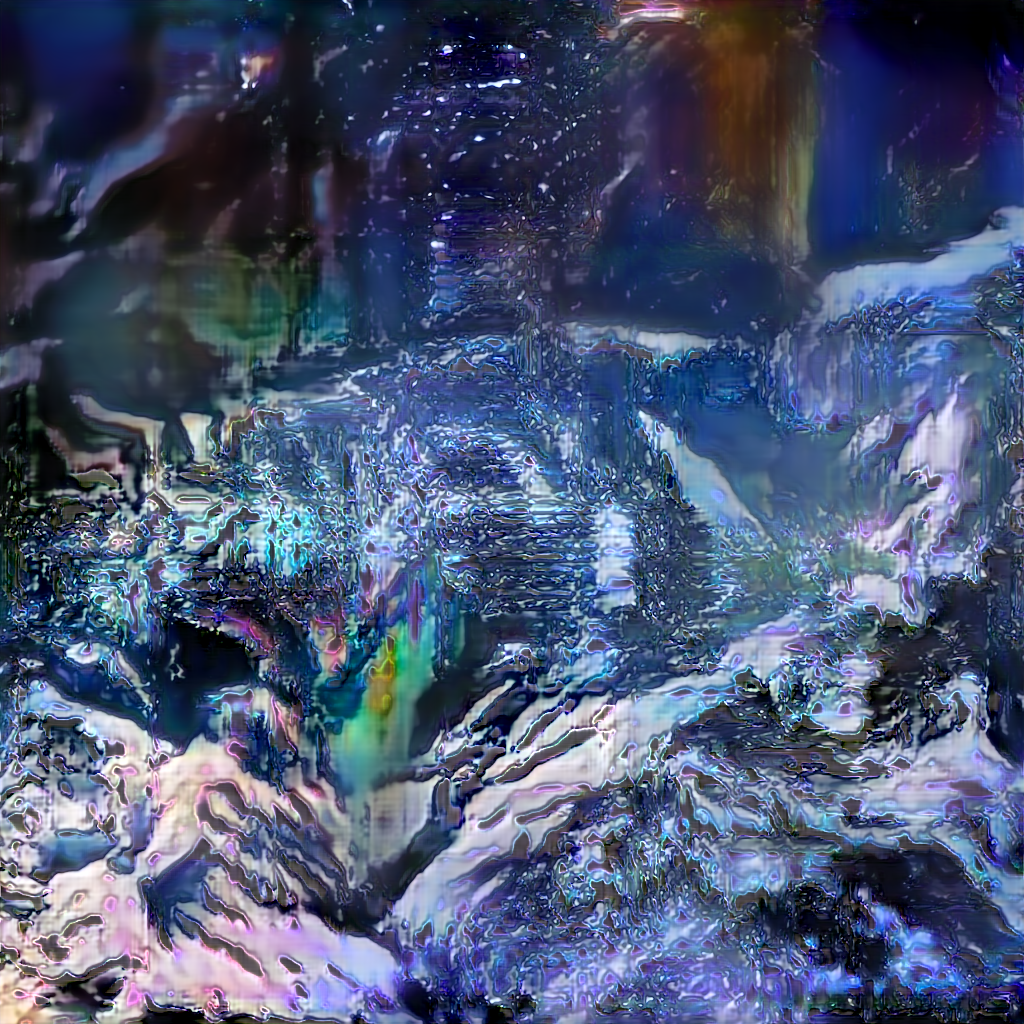

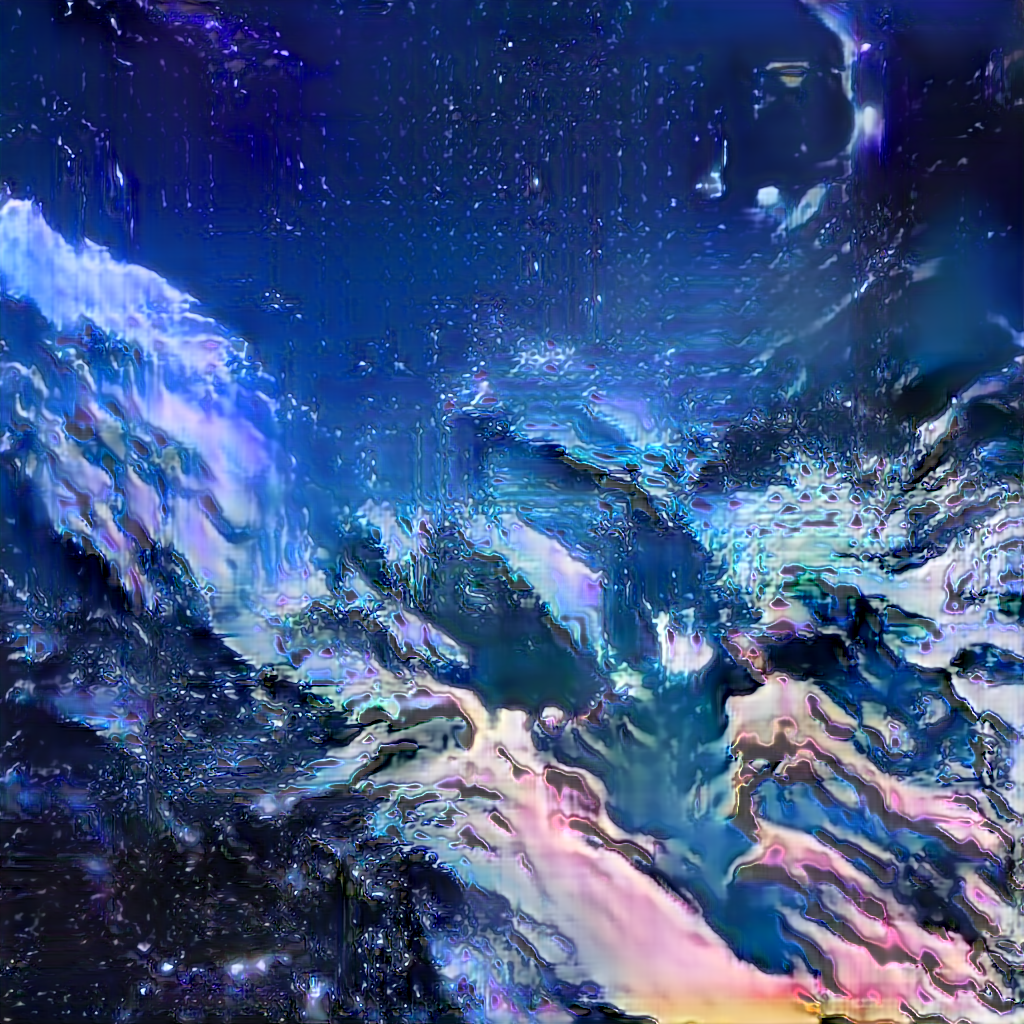

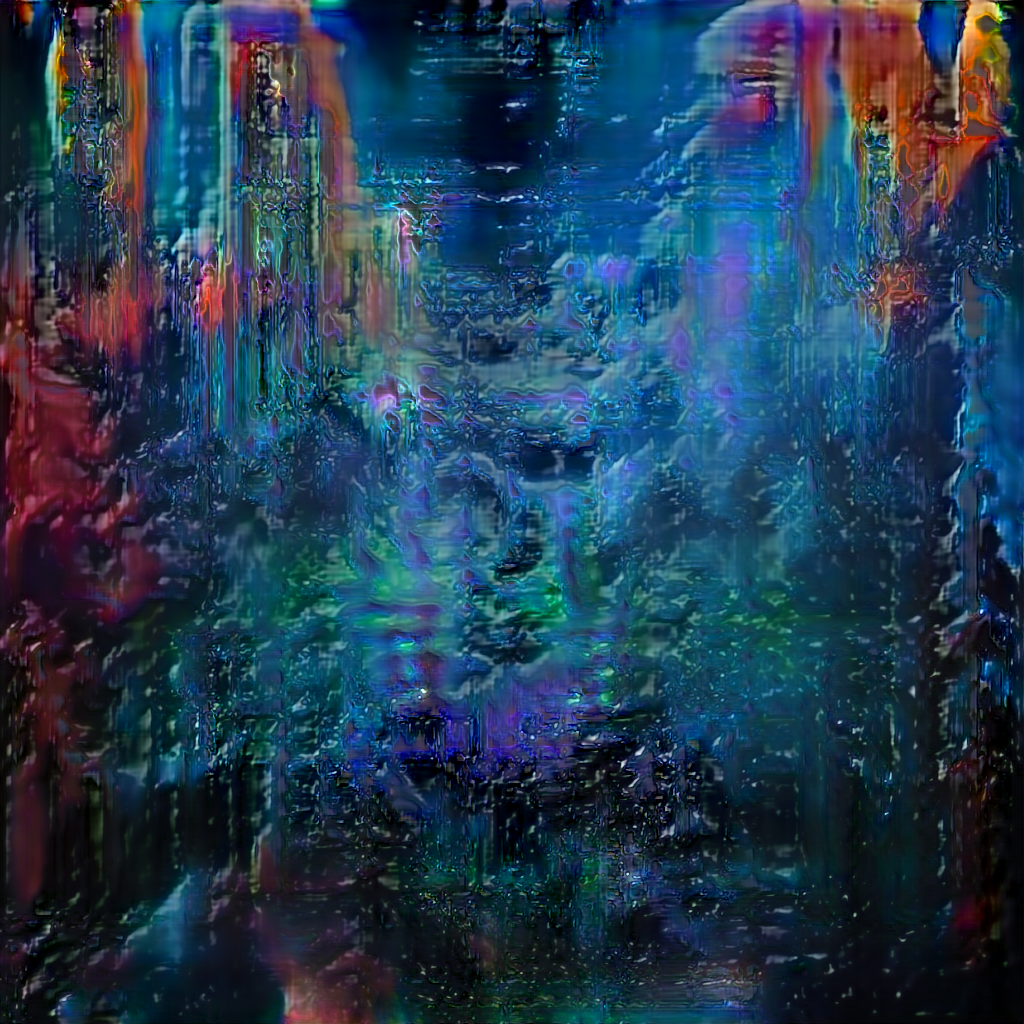

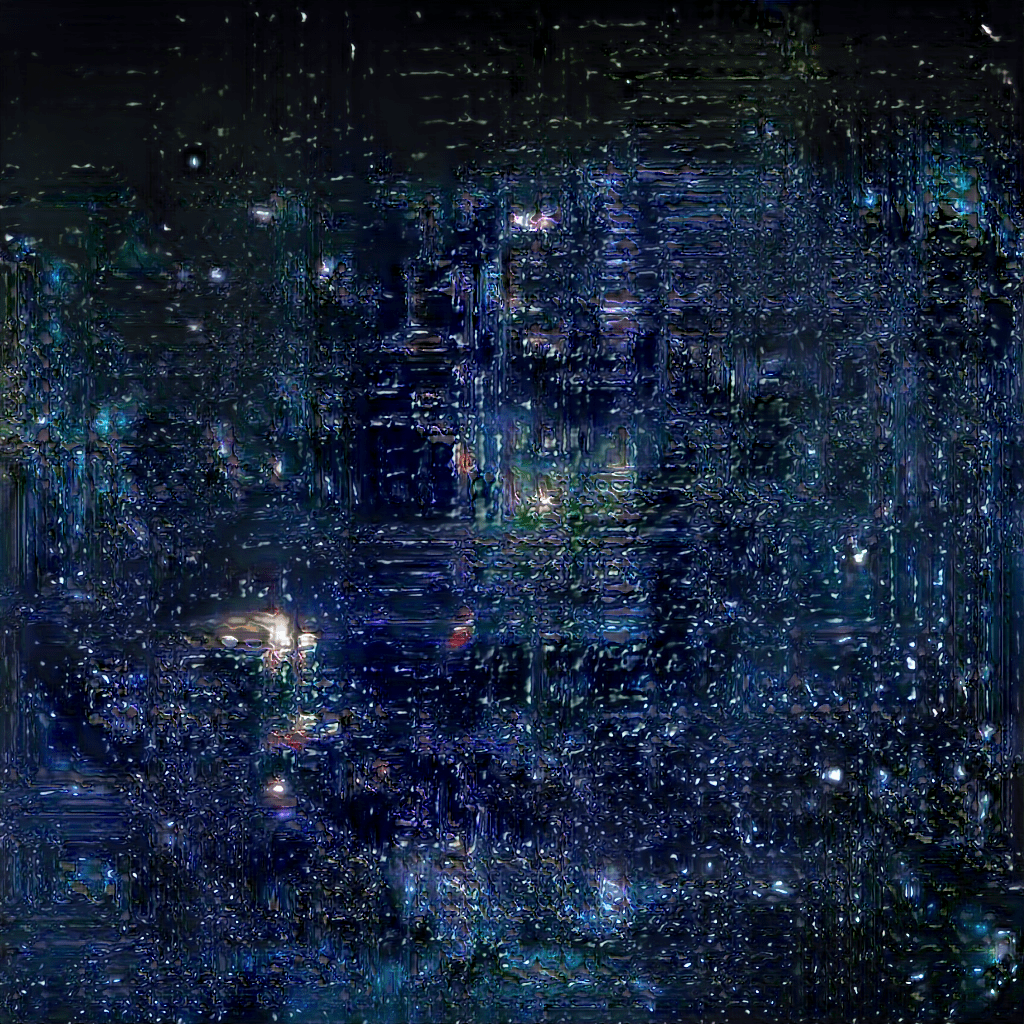

During 2018, while working on a project called c l a i r e [a highly self-indulgent project initially titled after the Grimes track Realiti, which then turned into something else almost entirely], we experimented with the idea of a piece of software that, given some video as input, would run an object detection algorithm, scrape as many images as possible from Google using the detected keywords plus related images, add them to its dataset and train a GAN [generative adversarial network]. The main idea was to repeat this over and over until the generated images became totally unrecognisable. For this example, however, we used the lyrics of the track along with the first iteration of the Google image scrape. The dataset was therefore, a bit unconventionally, an amalgam of various classes of photos, such as mountains and cityscapes, along with frames from the video of Realiti itself. We found the output quite holographic in style and almost expressive compared to the few other GAN-based projects we had seen.
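The loop described above can be sketched roughly as follows. This is only an outline under assumptions, not the code we actually ran: `iterate_dataset` and its three callables (`detect_labels`, `scrape_images`, `train_and_generate`) are hypothetical names standing in for the object detector, the Google image scraper and the GAN training step, and feeding the generated images back into the detector on each round is our reading of "over and over again".

```python
from typing import Callable, Iterable, List, Set


def iterate_dataset(
    seed_frames: List[str],
    detect_labels: Callable[[str], Iterable[str]],
    scrape_images: Callable[[str], Iterable[str]],
    train_and_generate: Callable[[List[str]], List[str]],
    rounds: int = 3,
) -> List[str]:
    """Detect -> scrape -> grow dataset -> train, repeated `rounds` times.

    All three callables are placeholders (assumptions): plug in a real
    detector, scraper and GAN trainer. Items are referred to by path/ID.
    """
    dataset: List[str] = list(seed_frames)   # starts with the video frames
    inputs: List[str] = list(seed_frames)    # what the detector looks at this round
    generated: List[str] = []

    for _ in range(rounds):
        # 1. Collect keywords from whatever we are currently looking at.
        keywords: Set[str] = set()
        for item in inputs:
            keywords.update(detect_labels(item))

        # 2. Scrape images for each keyword and add them to the dataset.
        for keyword in sorted(keywords):
            dataset.extend(scrape_images(keyword))

        # 3. Train on the enlarged dataset and sample new images.
        generated = train_and_generate(dataset)

        # 4. Assumption: the next round runs detection on the generated images.
        inputs = generated

    return generated
```

With `rounds=1` this corresponds to the single scrape iteration used for the images in this post; larger values would push the output further away from anything recognisable.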

Here are some examples of the output:

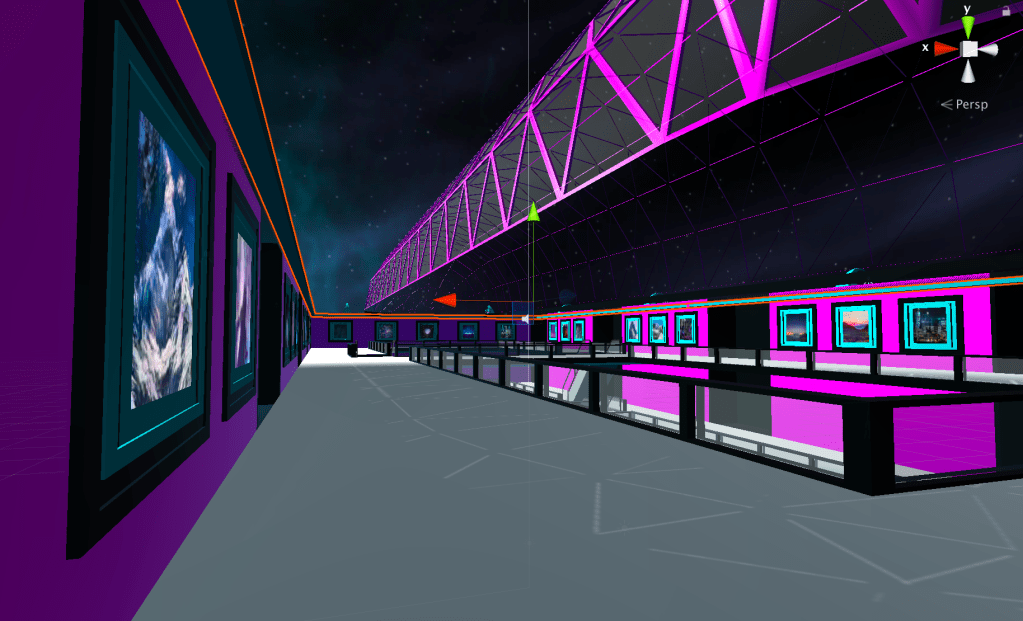

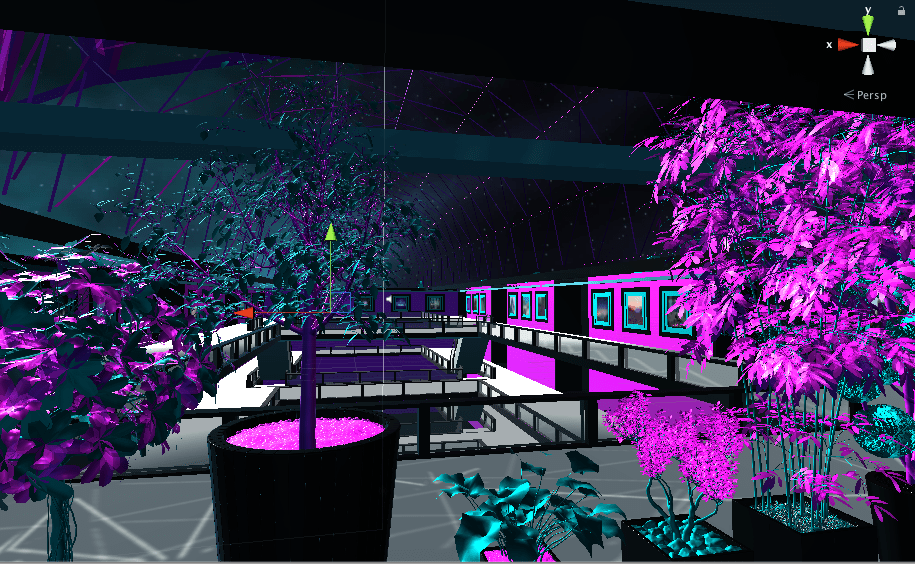

The image files generated by the model are currently purchasable as ERC-721 tokens [each token stores the RGB matrix of the specific image file] from our web-store or from the virtual exhibition on the third floor of the M Ξ T A P L Ξ X Mall. The exhibition contains a selection of these paintings, and there are a few improvements we still have to make, such as fetching each image's metadata from the API and displaying the price and the owner’s hash below the painting. A direct integration of the transaction within the WebGL build would also be possible if the webpage were rendered on a surface within the build.
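To give a feel for what "storing the RGB matrix" could look like, here is a small sketch that packs a matrix of pixels into a flat byte string and back. The layout (a 4-byte width/height header followed by raw R, G, B bytes) and the names `pack_rgb` and `unpack_rgb` are assumptions made for illustration; the actual encoding used by the tokens may differ.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0..255


def pack_rgb(matrix: List[List[Pixel]]) -> bytes:
    """Serialise a row-major RGB matrix as: width (2 bytes), height (2 bytes), pixels."""
    height = len(matrix)
    width = len(matrix[0]) if height else 0
    header = width.to_bytes(2, "big") + height.to_bytes(2, "big")
    body = bytearray()
    for row in matrix:
        if len(row) != width:
            raise ValueError("all rows must have the same width")
        for r, g, b in row:
            # bytearray.extend raises ValueError for values outside 0..255
            body.extend((r, g, b))
    return header + bytes(body)


def unpack_rgb(payload: bytes) -> List[List[Pixel]]:
    """Inverse of pack_rgb: rebuild the RGB matrix from the byte string."""
    width = int.from_bytes(payload[0:2], "big")
    height = int.from_bytes(payload[2:4], "big")
    pixels = payload[4:]
    if len(pixels) != width * height * 3:
        raise ValueError("payload length does not match the header")
    rows: List[List[Pixel]] = []
    for y in range(height):
        row: List[Pixel] = []
        for x in range(width):
            i = (y * width + x) * 3
            row.append((pixels[i], pixels[i + 1], pixels[i + 2]))
        rows.append(row)
    return rows
```

A payload like this grows with the pixel count (three bytes per pixel), which is worth keeping in mind if the matrix is meant to live inside the token itself rather than behind a link.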

You can purchase the paintings here:

https://www.cslasha.com/metaplex/realiti_store.html

The store currently relies on the OpenSea distribution, but re-creating it from scratch is at the top of our to-do list.

You can visit the exhibition on the third floor of the Mall:

https://www.cslasha.com/metaplexconstruct